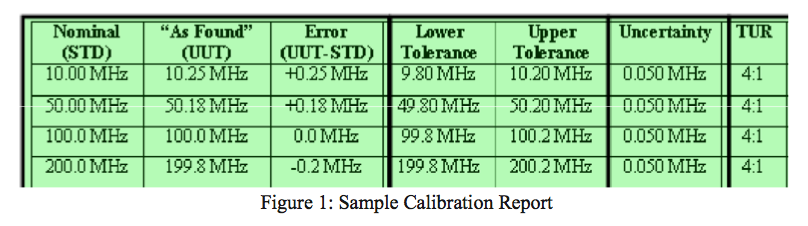

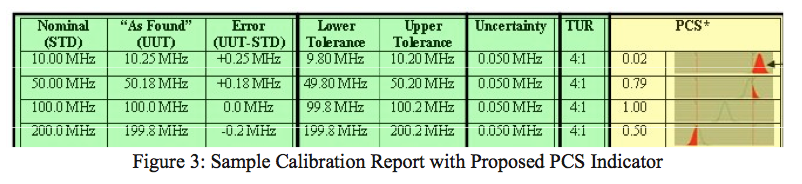

Finally, if the instrument’s tolerances are shown as well as the lab’s uncertainty for the measurement, then a ratio can be included as a general bias indicator (bias of the lab’s measuring uncertainty on the reported measurement).

What is calibration and why is it necessary?

A competent laboratory compares your instrument to a laboratory standard and quantifies the error in your instrument so that this error can be compared to the acceptable tolerances that indicate whether the instrument negatively influenced the application in which it was used during its most recent usage interval.

Let’s break down this statement:

- A competent laboratory compares your instrument to a laboratory standard.

- This quantifies the error that existed in your instrument while it was being used in your production or service processes.

- Your company used the instrument to make decisions about your production or service processes while the instrument was in service (i.e., during its calibration interval or usage cycle).

- If this error exceeded predetermined acceptable tolerances, then an impact analysis is needed.

- An impact analysis determines if the instrument negatively influenced the application in which it was used during its most recent usage interval.

By dissecting the separate parts of this statement, you can determine whether your current calibration processes (internal or outsourced) are helping or hindering your efforts to minimize risk as you work to make a good product or deliver a good service.

Laboratory Competence

It all starts with a competent laboratory. Does accreditation guarantee competence or consistency? How do you tell whether a laboratory is competent?

This is the premise that underlies ISO/IEC 17025: General Requirements for the Competence of Testing and Calibration Laboratories. The accreditation process under this standard requires an unbiased accreditation body to assess the laboratory’s quality system (section 4, which mirrors ISO 9001) and the laboratory’s technical competence (covered under section 5 of the standard). The result of this accreditation audit is to identify the laboratory’s capabilities, stated in their scope of accreditation.

A scope of accreditation is a listing of each measurement parameter that the lab can perform, indicating the lab’s Best Measurement Capability (BMC) for each parameter. Bottom line: the ISO 17025 accreditation process authenticates the laboratory’s measuring capabilities. It ensures that the lab knows how to identify their uncertainty of measurement when performing calibrations on your instruments.

Measurement uncertainty is the part of a calibration that validates whether the lab can actually detect the error in your instrument. So, you can tell whether a lab is competent by looking at their ISO 17025 certificate and scope of accreditation. But, more importantly, you can tell whether a lab can detect the error in your specific instrument by comparing the tolerance of your instrument to the lab’s measurement uncertainty for any reported reading. This comparison is usually expressed as a ratio.

This ratio has a name: Test Uncertainty Ratio, or TUR. Traditionally, a TUR of 4:1 or higher is accepted as a good ratio. A 4:1 ratio indicates that the instrument’s tolerance is 4 times larger than the lab’s measurement uncertainty for the reading on which they are reporting.
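The ratio described above can be sketched in a few lines. This is a minimal illustration, assuming a symmetric plus-or-minus tolerance and an uncertainty stated in the same units; the function names and the sample values are hypothetical and do not come from any particular calibration certificate.

```python
def compute_tur(tolerance: float, uncertainty: float) -> float:
    """Return the Test Uncertainty Ratio for a symmetric +/- tolerance.

    tolerance:   allowed deviation on either side of nominal (e.g. 0.5 for +/-0.5)
    uncertainty: the lab's measurement uncertainty for the reading, same units
    """
    if tolerance <= 0 or uncertainty <= 0:
        raise ValueError("tolerance and uncertainty must be positive")
    return tolerance / uncertainty


def meets_target(tur: float, target: float = 4.0) -> bool:
    """True when the ratio reaches the traditional 4:1 target (or another target)."""
    return tur >= target


# Hypothetical reading: instrument tolerance of +/-0.5, lab uncertainty of 0.125
tur = compute_tur(tolerance=0.5, uncertainty=0.125)
print(f"TUR = {tur:.1f}:1, meets 4:1 target: {meets_target(tur)}")
```

The target is a parameter rather than a constant because 4:1 is a convention, and your own quality system may call for a different threshold.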

While we’re on the subject of TUR, you should know that there is an outdated ratio that is still in use in some companies today: the Test Accuracy Ratio, or TAR. This ratio compares the tolerance of your instrument to the accuracy of the standard that was used for the calibration. It is outdated because it does not consider all the factors that go into making a measurement. It considers only one factor: the estimate of error in the laboratory’s standard. It does not speak to any other sources of error, which are an important part of determining whether a lab is competent in making a measurement. Therefore, the TAR indicator is incomplete and has been superseded by TUR.
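To make the difference concrete, here is a hedged sketch that computes both ratios for the same calibration point. It assumes the common practice of combining independent uncertainty contributions by root-sum-square and expanding with a coverage factor of k = 2. The contribution values, their labels, and the function names are hypothetical, chosen only for illustration.

```python
import math


def compute_tar(tolerance: float, standard_accuracy: float) -> float:
    """Test Accuracy Ratio: considers only the accuracy of the lab's standard."""
    return tolerance / standard_accuracy


def expanded_uncertainty(contributions: list[float], k: float = 2.0) -> float:
    """Combine independent standard uncertainties by root-sum-square, then expand by k."""
    combined = math.sqrt(sum(u * u for u in contributions))
    return k * combined


def compute_tur(tolerance: float, expanded_u: float) -> float:
    """Test Uncertainty Ratio: considers every contribution to the measurement."""
    return tolerance / expanded_u


tolerance = 1.0          # instrument tolerance, +/- units (hypothetical)
standard_accuracy = 0.1  # accuracy of the lab's standard, same units (hypothetical)

# Hypothetical uncertainty budget, each entry a standard uncertainty
contributions = [
    standard_accuracy / math.sqrt(3),  # the standard, treated as a rectangular distribution
    0.05,                              # repeatability of the readings
    0.03,                              # resolution of the instrument under test
]

tar = compute_tar(tolerance, standard_accuracy)
tur = compute_tur(tolerance, expanded_uncertainty(contributions))
print(f"TAR = {tar:.1f}:1")
print(f"TUR = {tur:.1f}:1")
```

With these assumed numbers the TAR looks noticeably better than the TUR, which is the point: the TAR ignores every contribution except the standard itself.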

Many of the calibration labs that still use TAR do so because they are not accredited or do not understand how to calculate their measurement uncertainty. But a laboratory without measurement uncertainty is a laboratory whose measurements cannot be validated. Without a means of authenticating a laboratory’s measurement capabilities, how can they prove that they are competent to report calibration errors for the instruments they calibrate?

Is your calibration supplier accredited? What about your internal calibration department? Do they know the uncertainty of their measurements? How do your calibration providers (internal or external) prove their competence in reporting the errors for the calibrations they perform?

Instrument Error

Once you are certain that you are using a competent laboratory to report the results of your calibrations, you can be more confident that the reported errors are correct. This protects you from two situations: wasting time on impact studies that were not necessary, and being unaware that an impact study was needed because you were not informed. Either situation can be very costly: the former because of wasted resources (also known as a “false reject” situation) and the latter because of potentially bad consequences due to the lack of an impact analysis (also known as a “false accept” situation).

Decision Process

You should be interested in the results of your calibrations because someone in your organization (the person or people using the instrument, perhaps you) is making decisions about the process in which the instrument is used. These decisions are based on the values that the instrument presents to them. If these values are incorrect, either because the instrument is being used incorrectly or because of the error in the instrument, then the decisions about the product or service being rendered are adversely affected as well.

For example, suppose that during the instrument’s normal use in your facility, the user believes, based on the instrument’s indication, that the process is out of control or exceeds a limit of some sort. If the indication is incorrect because the instrument has drifted beyond its allowable tolerances, then a bad decision has been made, followed by an unnecessary corrective action.

This unnecessary corrective action may move a process from an in-control status to an out-of-control status, due to the unknown error of the instrument on which the decision had been made. Upon return from calibration, this error would be identified on the calibration report and an impact study on the processes where the instrument had been used since its last calibration would identify where this error affected decisions made about these processes in that period.

If you are not performing impact studies or not performing thorough impact studies, then you are susceptible to bad consequences. Quite honestly, if you’re not going to use your calibration data, then there is no point in calibrating your instruments. But you do not want the risks that go with errors in measurement, so calibrate your instruments and use the results to minimize that risk!

Acceptable Error

You need to be aware of the many assumptions that can influence the expected performance of your instrument.

- What are your expectations for the performance of your instrument?

- Are your expectations the same as those of everyone else in your organization?

- Who determines what is expected of the instrument while it is in use?

- A quality engineer? A manufacturing engineer? A technician or production line worker using the instrument to perform daily work?

- How long will the instrument be in use before it is recalibrated?

- What are the tolerances that indicate how far the instrument can drift before it adversely affects the decisions that are being made as you rely on the values the instrument indicates?

While these questions encroach upon the other parts of the original statement, they are necessary to illustrate a point about instrument error. If it is possible for people within your organization to interpret the acceptable tolerance of an instrument differently, then what interpretation is your calibration provider applying to your instruments?

Even if you are using a competent laboratory, there is no international standard that governs the tolerances of an instrument. ISO 17025 states only that the laboratory must understand their client’s needs for calibration.

Likewise, it is a good idea to understand how your calibration provider applies tolerances to the instrument. This is important because, whether or not you look at each individual calibration result, if the tolerances on the report do not match your expected tolerance limits, then the instrument might not be flagged as “Out of Tolerance” when it should be; alternatively, it could be flagged as “Out of Tolerance” when it should not be.

There are many reasons why your expectation and the lab’s reported tolerances might not match. Among them are:

- Multiple sources for accuracy specifications reported by the manufacturer.

- Complex specifications interpreted incorrectly.

- Manufacturer’s specifications expected by the instrument user but different specifications used by the lab.

- Manufacturer’s specifications used by the lab but different specifications expected by the instrument user.

- Varying methodology in combining multi-part specifications.

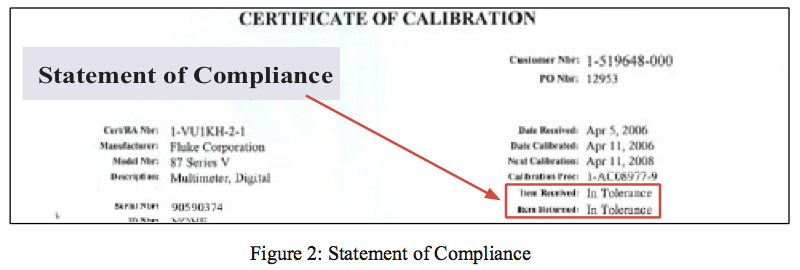

From this, you can see that it is very important to communicate your expectations of instrument tolerances to your calibration provider so that you can rely upon the statement of compliance and any associated tolerance conditions to correctly flag when you need to perform an impact analysis (and when you don’t). This conversation may reveal that the laboratory is calculating the specification differently than you are, which means that one or both of you have made an incorrect assumption about the accuracy or about how to convert the accuracy to a tolerance limit.
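As a rough illustration of how such a mismatch can arise, the sketch below converts the same two-part accuracy specification into a tolerance limit in two different ways. The specification (a percentage of reading plus a percentage of range), the two combination methods, and all of the values are hypothetical; neither method is presented here as the correct one for any real instrument.

```python
import math


def limit_additive(reading: float, full_scale: float,
                   pct_reading: float, pct_range: float) -> float:
    """Tolerance limit when the two parts of the spec are simply added."""
    return abs(reading) * pct_reading / 100 + full_scale * pct_range / 100


def limit_rss(reading: float, full_scale: float,
              pct_reading: float, pct_range: float) -> float:
    """Tolerance limit when the two parts are combined by root-sum-square."""
    part_reading = abs(reading) * pct_reading / 100
    part_range = full_scale * pct_range / 100
    return math.hypot(part_reading, part_range)


def is_out_of_tolerance(error: float, limit: float) -> bool:
    """True when the reported error exceeds the tolerance limit."""
    return abs(error) > limit


# Hypothetical spec: +/-(0.05 % of reading + 0.01 % of range), 100-unit range
reading, full_scale = 10.0, 100.0
error = 0.012  # error reported by the lab at this test point

for name, fn in (("additive", limit_additive), ("root-sum-square", limit_rss)):
    limit = fn(reading, full_scale, pct_reading=0.05, pct_range=0.01)
    flag = "Out of Tolerance" if is_out_of_tolerance(error, limit) else "In Tolerance"
    print(f"{name:>16}: limit = +/-{limit:.4f} -> {flag}")
```

In this made-up case the same reported error is flagged differently depending on which interpretation is applied, which is why the tolerance calculation is worth agreeing on before the calibration is performed.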

The point here is not to place blame when a mismatch occurs, but rather to identify that there is a mismatch and correct it before the calibration is performed, to preserve the intent of the process – that is, to identify when the instrument exceeds the tolerances that indicate an impact study is needed. If you don’t ensure that this intent has been preserved, then the calibration may become useless, again causing your organization unnecessary costs.

Impact Analysis

The reason to perform an impact analysis is that this is the final step in minimizing risk in your measurements. Again, recall that someone is making decisions about your processes based on values that are taken from your measuring instruments. You’ve predetermined that an instrument can drift some allowable amount before it potentially impacts the quality of your product or service. If your calibration report indicates an Out of Tolerance condition, you will need to follow through with an impact analysis to understand how much this error affected your product/service or decisions made about your product/service. This step closes the loop in using instrumentation to help control the quality of your product/service.

When to Perform an Impact Study

Transcat, as your Trusted Calibration Professional, has gone the extra mile in understanding our clients’ need to make sense of calibration results. We have done research in the quest to find a means of reporting results that takes the complexity out of understanding all the details on a calibration report.

One area of risk that only the most discriminating clients understand is that even competent laboratories can end up with situations where they are not certain about the result of a measurement. This occurs when your instrument has drifted sufficiently close to one end of its tolerance limit, so that the uncertainty of the measurement clouds the lab’s ability to state clearly whether the instrument is In Tolerance or Out of Tolerance – even if it appears that the instrument is In Tolerance to the average person.

When this situation occurs, it is important for you to understand how the instrument reading, the instrument tolerance, and the lab’s uncertainty come into play. Here, the TUR (or any ratio indicator) does not reveal that this risk is present, so it is of no help.
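One way to picture the interplay of reading, tolerance, and uncertainty is a simple three-way classification. The sketch below is an assumption made for illustration, not a decision rule taken from this paper: it treats the result as indeterminate whenever the band of reported error plus or minus the lab’s uncertainty straddles the tolerance limit. The function name and sample values are hypothetical.

```python
def classify(error: float, tolerance: float, uncertainty: float) -> str:
    """Classify a calibration point for a symmetric +/- tolerance.

    error:       reported error of the instrument (reading minus standard)
    tolerance:   allowed deviation on either side of zero error
    uncertainty: the lab's measurement uncertainty for this reading
    """
    magnitude = abs(error)
    if magnitude + uncertainty <= tolerance:
        return "In Tolerance"       # whole uncertainty band is inside the limit
    if magnitude - uncertainty > tolerance:
        return "Out of Tolerance"   # whole uncertainty band is outside the limit
    return "Indeterminate"          # uncertainty band straddles the limit


tolerance = 1.0
uncertainty = 0.01  # hypothetical lab uncertainty, a 100:1 ratio to the tolerance

for error in (0.5, 0.995, 1.2):
    print(f"error = {error:+.3f} -> {classify(error, tolerance, uncertainty)}")
```

The middle case is the one that matters: the ratio of tolerance to uncertainty is very favorable, yet the reading sits so close to the limit that the lab cannot state a clear result.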

Although it is not the norm, this situation can occur even if the lab has a capability that is 100 times better than the measurement on which they are reporting. So, rather than educating Transcat’s 8000+ clients on how to interpret all of this information from the calibration report, we have recently developed a means of normalizing all of this data into one figure that anyone could use to identify when risk is present and when it is not. We call this the Probability of Compliance to the Specification, or PCS indicator (see fig. 3).

Although not yet in use on our certificates, the PCS will indicate when to perform an impact study, as well as when this is not necessary. The simplicity of this indicator is that any value less than 1.00 (or less than 100, if it is scaled as in a grading system) indicates that the instrument’s reading is in question, because the lab’s measurement uncertainty is encroaching on the tolerance limit. Either way, a value less than 1.00 means it is important for you to compare the instrument reading against the process in which it was used.
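The formula behind the PCS indicator is not given here, so the following is not a reproduction of it. It is only a generic sketch of the underlying idea, a probability that the true error lies within tolerance, under stated assumptions: the measurement is modeled as normally distributed around the reported error, and the lab’s uncertainty is an expanded uncertainty with coverage factor k = 2. The function name and the sample values are hypothetical.

```python
from statistics import NormalDist


def probability_in_tolerance(error: float, tolerance: float,
                             expanded_uncertainty: float, k: float = 2.0) -> float:
    """Probability that the true error lies within +/- tolerance.

    Assumes a normal distribution centered on the reported error, with a
    standard deviation equal to the expanded uncertainty divided by k.
    """
    if tolerance <= 0 or expanded_uncertainty <= 0 or k <= 0:
        raise ValueError("tolerance, uncertainty, and k must be positive")
    dist = NormalDist(mu=error, sigma=expanded_uncertainty / k)
    return dist.cdf(tolerance) - dist.cdf(-tolerance)


tolerance = 1.0
expanded_u = 0.01  # hypothetical: a 100:1 ratio of tolerance to uncertainty

for error in (0.5, 0.995):
    p = probability_in_tolerance(error, tolerance, expanded_u)
    print(f"error = {error:+.3f} -> probability in tolerance = {p:.2f}")
```

Under these assumptions, a reading well inside the limits scores essentially 1.00, while a reading just inside the limit scores visibly lower even though the lab’s capability is very good. That is the kind of case a single compliance figure is meant to expose.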