What is mutual recognition?

Mutual Recognition Arrangements (MRAs) are documented systems whereby a consortium of participating countries, through consensus, evaluation, and validation of each other’s processes, provides confidence in the correctness of each participant’s accreditation activities. Another aspect of these MRAs is that each participant agrees to accept accreditation by the other participants’ organizations at the same level as its own. Participant requirements include:

- Operate to ISO/IEC Guide 58 (since replaced by ISO/IEC 17011)

- Use ISO/IEC 17025 as minimum criteria

- Undergo peer evaluation

- Become signatories to regional and international Mutual Recognition Arrangements

MRA guidelines include:

- International proficiency testing

- Use of consensus methods

- Uniform interpretation of standards

- Agreed-upon criteria for traceable chains of measurements

A brief (recent) history of calibration

Calibration has been with us throughout the ages in one form or another. Back in Egyptian times there was a need to standardize measurements. This was a necessity for trade and architecture. Can you imagine building a pyramid with varying sizes of blocks? Through the ages, standardization and frequency of measurements have increased, as well as the ability to realize measurements to a defined standard.

Currently, measurements span many disciplines, with multiple industries affected. These include automotive, defense, computers, pharmaceuticals, aeronautics, petroleum, construction, meteorological and many more. It’s hard to find an area in our lives that does not include measurements. Even daily consumer purchases are part of this process – look at the numbers on the gas pumps to ensure that when the pump says a gallon, that’s what you’ve received, not a half gallon or a gallon and a half.

Twenty-five years ago, calibration was primarily provided by national laboratories, original equipment manufacturers (OEMs) of test equipment and the U.S. military. There were few third-party calibration suppliers and internal calibration programs. Civilian providers of calibration services typically lagged far behind their armed forces counterparts. There were some very good programs in the civilian sector, but they were few and far between. There were many reasons for this, foremost among them education. There were also many consequences. It became apparent that if you did not standardize your measurement processes, your product would be incompatible with products from suppliers that did. Typical results included high failure rates and poor quality. Many industries appeared to accept this, although others, such as the military and its suppliers, could not afford it and therefore implemented measurement programs.

This pattern remained the same until the late 1980s, with the introduction of the ISO 9000 series of quality standards. These standards stated the requirement that all equipment used to determine product conformance be calibrated to a known tolerance and be traceable to a national or international standard, or physical constant. They also noted the requirement that calibrations be performed by trained and knowledgeable individuals, using documented procedures, and that corrective action be documented in cases where an instrument is out of tolerance.

Although ISO 9000 imposed requirements for calibration on instrument users, it did not define requirements for calibration suppliers. Instead, it allowed companies to implement their own selection criteria for suppliers, including suppliers of calibration services.

With the release of the automotive industry’s QS-9000, the criteria were better defined in that calibrations performed by outside suppliers had to be compliant with ISO/IEC 17025 or equivalent, or could be performed by the OEM. In-house calibrations were to be compliant with the same requirements; however, they were subject only to QS-9000 level audits.

With this new requirement for calibration on a large scale, third-party calibration laboratories multiplied. As they multiplied and competition increased, the quality of their processes decreased. These decreases in quality included less experienced personnel performing calibrations, shortcuts in traceability, extended calibration cycles of standards without justification, abbreviated calibration of instruments to shorten calibration times, etc. It’s no surprise that competition without quality directives or regulation equals a poor quality product. And with calibration, unlike many other purchases, there is no easy way to tell what you have received. After all, if you order widget A, which is to be blue and 2" x 3" to fit in slot B on widget C, you can tell by plugging it in whether it’s correctly manufactured.

But how do you ascertain a quality calibration? There are ways, but they are relatively costly and time consuming. One way is to set up an internal calibration laboratory, on a small scale, to verify supplier quality. Even on a small scale this option is costly and strays from core competence. Another way would be to take a sampling of calibrated instruments from supplier A and do a correlation study with supplier B or the OEM. Again, this option is somewhat costly, though not as costly as the first option, and it is also time consuming. Calibration by OEMs is another option. Although they generally provide a thorough and quality calibration, turnaround time can be as long as eight weeks.

Now that we understand the problems with quality calibration, let’s look at one possible solution.

Accreditation to ISO/IEC 17025

ISO/IEC 17025 is not a guideline but rather an international standard. It contains the essential elements for competence of calibration and testing labs and includes the general quality system requirements of ISO 9000. There are many elements to this document, but let’s discuss the technical requirements. These are the elements that primarily ensure the correctness of the measurement process.

Technical requirements

General Requirements

ISO/IEC 17025 states that a laboratory must consider all factors that may contribute to errors in the calibration process. These include:

- Personnel

- Accommodation and environmental conditions

- Methods and validation

- Equipment

- Measurement traceability

- Sampling

- Handling

Personnel Requirements

As the field of metrology is diverse, including measurements from magnetism to light (luminance) to dimensional tools, personnel training is an important topic. Let’s look at how the U.S. military approaches training for metrology. First, the selection of personnel requires high scores in both electrical and mechanical aptitude entry tests. The school’s curriculum involves four months of in-depth electronic fundamentals and six to seven months of specific metrology training including basic measurement techniques, DC/low frequency, signal generation and measurement, and high-end DC/low frequency measurements, just to name a few. After completion of formal training, personnel are given both On the Job Training (OJT) and written correspondence courses to ease them into the field. Typically, training goes on for six months to one year after completion of the formal training before personnel are considered proficient at certain tasks. After this period, training continues for specific metrology disciplines throughout an individual’s career.

This is an outstanding training program and meets the needs of the measurement tasks at hand. The level of proficiency in the civilian sector must also be adequate. Generally, you will find that most calibration laboratories employ military trained personnel. Presently, the military has limited its training of metrology personnel, and there is limited, non-centralized, formal training available in the civilian sector. This is creating a serious problem. The need for quality calibrations and the experienced personnel to perform them is increasing, while the availability of qualified personnel is decreasing.

There is hope. Currently, there is a program available to assist in filling this void. This program was developed through the American Society for Quality (ASQ) Measurement Quality Division (MQD), and is called the Certified Calibration Technician (CCT) program. As with the other ASQ certifications, this program sets objective criteria for certification and the attendant body of knowledge needed, which in turn drives the initiative for formal training in the civilian sector. Additionally, the National Conference of Standards Laboratories (NCSLI) has commissioned a subcommittee on education, whose charter is to increase awareness of metrology as a career option and to develop an education program that will feed the industry with trained calibration technicians.

Accommodations & Environmental Conditions

Temperature influences many disciplines of metrology and should be controlled to a level appropriate for the calibrations performed. For example, control of ambient temperature to ±1°F during calibration of gage blocks is unacceptable. This is due to the expansion coefficient of the gage block material (6.4 µin/°F/in for steel) and the uncertainty of the gage block (+2/-4 µin for a grade 2 gage block). Control of ±1°F would be acceptable for calibration of a micrometer with an accuracy of 100 µin. Another example would be standard Manganin resistors, which have an approximate error of 16 ppm/°C. Obviously, to get resistance uncertainties to 1 ppm you will need better temperature control than 0.1°C.
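The arithmetic behind these two examples can be sketched as follows. The coefficients are the ones quoted above; the 1-inch block length and the function names are assumptions made for illustration only.

```python
# Coefficients quoted in the text; the 1-inch block length used below is an assumed example.
STEEL_EXPANSION_UIN_PER_F_PER_IN = 6.4  # microinches per degree F per inch of length
MANGANIN_TEMPCO_PPM_PER_C = 16.0        # ppm per degree C (approximate)


def gage_block_growth_uin(length_in: float, delta_f: float) -> float:
    """Length change, in microinches, of a steel gage block for a temperature change in degrees F."""
    return STEEL_EXPANSION_UIN_PER_F_PER_IN * length_in * delta_f


def resistor_shift_ppm(delta_c: float) -> float:
    """Resistance change, in ppm, of a Manganin resistor for a temperature change in degrees C."""
    return MANGANIN_TEMPCO_PPM_PER_C * delta_c


# A 1-inch steel block drifting by 1 degree F moves by 6.4 microinches,
# which already exceeds the +2/-4 microinch band of a grade 2 block.
print(gage_block_growth_uin(1.0, 1.0))

# A 0.1 degree C excursion moves a Manganin resistor by roughly 1.6 ppm,
# more than a 1 ppm uncertainty target allows.
print(round(resistor_shift_ppm(0.1), 3))
```

The same 6.4 µin shift is negligible against the 100 µin accuracy of a micrometer, which is why the acceptable level of temperature control depends on the calibration being performed.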

Humidity requirements are much less stringent. Primarily, concerns are that high humidity will cause corrosion of most metal parts and low humidity will cause static electricity problems.

Vibration requirements would be a concern in optics and mass measurements.

Gravitational/barometric pressure requirements would have a large influence in pressure and force measurements, and in primary resistor measurements.

Contaminants affect all disciplines of metrology, from particulates in mass measurements, to contamination of test leads/equipment connectors for resistance measurements, to filters for electronic test equipment. Clean, clean, clean is the directive.

Methods & Validation

Procedures must include steps to ensure the necessary components of error or uncertainty are considered for the level of the calibration. Certainly, the calibration technician needs to understand how the uncertainty of the measurement relates to the tolerance of the measurement. This relationship is known as the Test Uncertainty Ratio (TUR). The lower the TUR in a calibration process, the greater the risk that an in-tolerance instrument is reported as Out-Of-Tolerance (OOT) or that an OOT condition is reported as in-tolerance. Validation must be done on non-standard methods.
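As a rough illustration, TUR is commonly computed as the tolerance of the instrument being calibrated divided by the uncertainty of the calibration process, both expressed as ± half-widths in the same units. The function name and the example figures below are hypothetical, not taken from any standard or from a real instrument.

```python
def tur(tolerance: float, expanded_uncertainty: float) -> float:
    """Test Uncertainty Ratio: instrument tolerance over calibration uncertainty.

    Both arguments are plus/minus half-widths in the same units.
    """
    if expanded_uncertainty <= 0:
        raise ValueError("expanded_uncertainty must be positive")
    return tolerance / expanded_uncertainty


# Hypothetical example: a +/-40 uV tolerance checked with a +/-10 uV process.
print(tur(40.0, 10.0))  # 4.0

# The same process against a +/-15 uV tolerance leaves far less margin.
print(tur(15.0, 10.0))  # 1.5
```

A reading that sits near a tolerance limit is much harder to classify correctly in the second case, because the uncertainty band covers most of the tolerance band.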

Uncertainty is a big word that means a great deal to a measurement process, but it is not as difficult as one may think. In a nutshell, if I state that I can make a 1 volt measurement with an uncertainty of 10 microvolts (1V ± 10µV), when I make that 1 volt measurement, the actual value could be 0.999990 to 1.000010 volts (to a 95% confidence level).

Typically, uncertainties are stated at a 95% confidence level, or 2 sigma, throughout the measurement community. What this means is that 95% of the measurements will fall within the stated uncertainty (in this case, 1V ± 10µV). If the uncertainty were stated at a 99.7% confidence level, or 3 sigma, 99.7% of the measurements would fall within the stated uncertainty (i.e., 1V ± 15µV). All of this is based on proven statistical formulas.
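The step from ± 10µV at 2 sigma to ± 15µV at 3 sigma can be sketched as below. The sketch assumes a normal distribution, and the function name is invented for illustration.

```python
def rescale_expanded_uncertainty(expanded: float, k_from: float, k_to: float) -> float:
    """Convert an expanded uncertainty from one coverage factor to another.

    Assumes a normal distribution, where k=2 is about 95% and k=3 about 99.7%.
    """
    if k_from <= 0 or k_to <= 0:
        raise ValueError("coverage factors must be positive")
    standard_uncertainty = expanded / k_from  # the one-sigma value
    return standard_uncertainty * k_to


u_2sigma_uV = 10.0
u_3sigma_uV = rescale_expanded_uncertainty(u_2sigma_uV, k_from=2, k_to=3)
print(u_3sigma_uV)  # 15.0
```

The one-sigma value here is 5µV; the stated uncertainty is simply that value multiplied by the chosen coverage factor.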

The problem is identifying the components of uncertainty; we cannot use only the manufacturers’ specifications for the instrument. These are generally stability components from the manufacturer and do not include calibration uncertainties, temperature components, personnel components, environmental components, etc.
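One widely used way to combine such components, not necessarily the method the author has in mind, is to express each as a standard (one-sigma) uncertainty and take the root-sum-square. The sketch below assumes the components are uncorrelated; the component names and microvolt values are invented for illustration.

```python
import math


def combined_standard_uncertainty(components: dict) -> float:
    """Root-sum-square of uncorrelated standard uncertainties (all in the same units)."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


# Hypothetical budget, in microvolts, all values at one sigma.
budget_uV = {
    "manufacturer stability specification": 3.0,
    "calibration of the reference": 4.0,
    "temperature effects": 12.0,
}

u_c = combined_standard_uncertainty(budget_uV)
print(u_c)      # 13.0
print(2 * u_c)  # 26.0, the expanded uncertainty at k=2
```

In this made-up budget, relying on the manufacturer's 3µV stability figure alone would understate the combined uncertainty by more than a factor of four.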

If you think this is all overkill, start performing repeatability tests. You may improve your uncertainties.

Equipment

Is your equipment in good operational condition? Has proper maintenance been performed on it to ensure its continuing capability to meet the desired measurement parameters?

This is where you can really decrease your stability component or increase the time between calibrations. If you have an instrument that is specified at 0.1% for one year and you have ten or more calibration iterations of this instrument with a standard deviation of < 0.05%, then you may use this value as a Type A component to decrease the stability specification of this instrument. If you use fewer than 30 iterations, then you must apply Student’s t.
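A minimal sketch of that history check follows. The as-found values are invented, the function names are hypothetical, and the Student's t correction for small samples is deliberately left out.

```python
import statistics


def history_std_percent(as_found_errors_percent: list) -> float:
    """Sample standard deviation of as-found errors, each expressed in percent of reading."""
    if len(as_found_errors_percent) < 2:
        raise ValueError("at least two calibration results are required")
    return statistics.stdev(as_found_errors_percent)


def history_supports_type_a(as_found_errors_percent: list,
                            spec_percent: float,
                            min_points: int = 10) -> bool:
    """True when enough history exists and its spread is tighter than the published spec."""
    if len(as_found_errors_percent) < min_points:
        return False
    return history_std_percent(as_found_errors_percent) < spec_percent


# Hypothetical as-found errors (percent) from ten successive calibrations.
history = [0.02, -0.01, 0.03, 0.00, -0.02, 0.01, 0.02, -0.03, 0.00, 0.01]

print(round(history_std_percent(history), 4))
print(history_supports_type_a(history, spec_percent=0.1))
```

With fewer than 30 results, the standard deviation would still need to be multiplied by the appropriate Student's t factor before being used in an uncertainty budget.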

On any type of repeatability it is recommended to take ten or more measurements. This would keep your k value at 2.15 or less. Another option with this data is to extend the calibration cycle to eventually arrive at the true length of time between calibrations to justify this calibration interval.

The opposite applies for the situation in which your standard repeatedly, or intermittently, fails calibration (i.e., it does not have a good track record). You may have to increase the stability component or decrease the calibration interval, or replace the standard altogether.

Measurement Traceability

The following is derived from A2LA’s measurement traceability policy. Traceability is characterized by six essential elements:

- An unbroken chain of comparison: Traceability begins with an unbroken chain of comparison originating at national, international or intrinsic standards of measurement, and ends with the working reference standards of a given metrology laboratory.

- Measurement uncertainty: The measurement uncertainty for each step in the traceability chain must be calculated according to defined methods and must be stated at each step of the chain so that an overall uncertainty for the whole chain can be calculated.

- Documentation: Each step in the chain must be performed according to documented and generally acknowledged procedures, and the results must be documented in a calibration or test report.

- Competence: The laboratories or bodies performing one or more steps in the chain must supply evidence of technical competence, e.g., by demonstrating that they are accredited by a recognized accreditation body.

- Reference to SI units: Where possible, the primary national, international or intrinsic standards must be primary standards for realization of the SI units.

- Recalibration: Calibrations must be repeated at appropriate intervals in such a manner that traceability of the standard is preserved.
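To illustrate the measurement uncertainty element, the sketch below accumulates the uncertainty stated at each step of a chain, from the national standard down to a working standard. It assumes the contributions are uncorrelated and combines them by root-sum-square; the link names and ppm values are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Link:
    """One step in a traceability chain (names and values here are illustrative)."""
    name: str
    standard_uncertainty_ppm: float


def cumulative_uncertainty(chain: list) -> list:
    """Return (link name, accumulated standard uncertainty) for each step down the chain."""
    total_squared = 0.0
    result = []
    for link in chain:
        total_squared += link.standard_uncertainty_ppm ** 2
        result.append((link.name, math.sqrt(total_squared)))
    return result


chain = [
    Link("national laboratory", 0.3),
    Link("accredited reference laboratory", 0.4),
    Link("working reference standard", 1.2),
]

for name, u in cumulative_uncertainty(chain):
    print(f"{name}: {u:.2f} ppm")
```

The accumulated value can only grow as the chain gets longer, which is why each step must state its own uncertainty.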

Sampling

The laboratory’s internal procedures must include an adequate, documented sampling of finished product to demonstrate that the stated uncertainties are obtainable on a repeated basis.

Handling

Proper handling of test equipment is necessary to ensure the integrity of calibration results once the calibration has been completed. This category also includes storage of calibrated instruments prior to shipment or between uses, adequate packaging for transportation, and methods to prevent inadvertent contamination.

Assuring the Quality of Test & Calibration Results

It is imperative to ascertain that your standards are performing to their levels of stated stabilities. This is accomplished through repeatability tests on a frequent basis. The frequency is dictated by the characteristics of the instrument in question. For example, the HP 3458A specifications are based on a 24-hour self-calibration. Obviously, this must be done on a daily basis. To go one step further, let’s apply a known excitation of equivalent uncertainties or better (1V ± 10 µV) on a weekly basis. After evaluation of this data, if a shift in values is observed, then the measurement process would be investigated in more depth to determine the cause of the shift. Less frequent checks, such as on a quarterly basis, can also be used. These checks should cover more than one data point with an instrument that has higher accuracies or lower uncertainties than the instrument under test. All tests should be documented.
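The weekly check described above can be sketched as a simple limit test against the known excitation. The readings and the function name are invented; a real program would more likely track trends over time, for example with a control chart.

```python
def readings_outside_limit(readings: list, nominal: float, limit: float) -> list:
    """Return (index, reading) pairs that differ from the nominal by more than the limit."""
    return [(i, r) for i, r in enumerate(readings) if abs(r - nominal) > limit]


# Hypothetical weekly readings, in volts, of a 1 V source known to +/-10 uV.
weekly_readings = [1.000002, 0.999997, 1.000004, 1.000013]

flagged = readings_outside_limit(weekly_readings, nominal=1.0, limit=10e-6)
print(flagged)  # only the fourth reading (index 3) exceeds the limit
```

A flagged reading does not identify the cause; it only signals that the measurement process needs closer investigation.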

Proficiency Tests

These must be accomplished to ascertain that you are performing to your stated levels of uncertainty. These tests should encompass all disciplines for which you are accredited. Typically, to accomplish one of these tests, you are sent an artifact in a specific discipline of measurement. You will then measure this artifact and state your uncertainty of measurement. You will then be evaluated on your ability to measure the said artifact within your stated level of uncertainty. These tests are monitored or conducted by an outside, independent organization, to guarantee the integrity of the results.
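The text does not name the statistic used for this evaluation. One commonly used in interlaboratory comparisons is the normalized error, En, which divides the difference between the laboratory's value and the reference value by the root-sum-square of the two expanded uncertainties. A result with |En| at or below 1 is conventionally treated as satisfactory. The figures below are hypothetical.

```python
import math


def normalized_error(lab_value: float, ref_value: float,
                     u_lab: float, u_ref: float) -> float:
    """En number: u_lab and u_ref are expanded uncertainties in the same units as the values."""
    return (lab_value - ref_value) / math.sqrt(u_lab ** 2 + u_ref ** 2)


# Hypothetical artifact: deviations from nominal and uncertainties, all in microvolts.
en = normalized_error(lab_value=30.0, ref_value=0.0, u_lab=40.0, u_ref=30.0)
print(en)              # 0.6
print(abs(en) <= 1.0)  # True
```

Note that claiming a smaller uncertainty than the laboratory can actually achieve makes the denominator smaller and a failing result more likely.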

Reporting the Results

The reports must be clear, concise, and of value to the user. Measurements must be documented and reported to the customer upon request. Obviously, the calibration process involves a bit more than pushing buttons and turning knobs!

Auditing Process

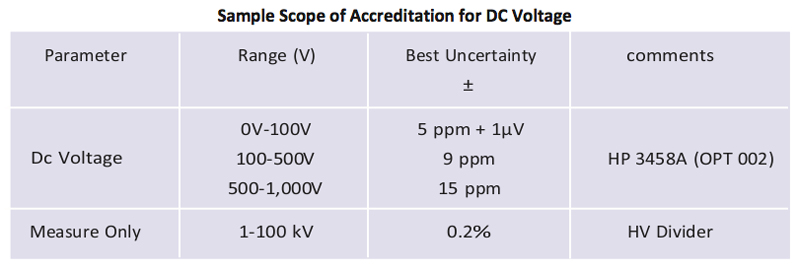

Laying out your scope consists of defining your capabilities by discipline and parameter, performing an uncertainty analysis or budget of each parameter, then stating your uncertainty for each parameter.

Audit

Your assigned auditors are experts in their fields of measurement. Their overall goal is to ascertain that your particular laboratory and its personnel can perform measurements to the stated levels of uncertainty listed on your scope of accreditation. The auditors accomplish this by evaluation of many factors. (These factors are listed earlier under Technical Requirements.) The auditors also evaluate factors concerning Management Requirements which can be found in ISO/IEC 17025.

Post-Audit

After completion of the on-site assessment and answering your corrective actions, the laboratory will be granted accreditation. The process is continuous. The laboratory is required to perform proficiency tests on a regular basis. The laboratory is also required to have a surveillance audit one year after the initial on-site assessment. This is necessary to ascertain that the laboratory is still operating under the same circumstances for which it was granted accreditation. The following year the initial accreditation process begins again.

What Benefits Does Accreditation Provide?

To the Laboratory

The laboratory will have to define and monitor its quality processes on a continuous basis to meet the guidelines of this standard. This aids the operation, because defined processes can be managed. A better quality process equates to fewer failures and less rework.

To the Customer

The overall benefits to the customer are a much higher level of confidence in the correctness of the customer’s measurement process and a subsequent quality increase in the final product. This will also lower the reject rate and drive down risk in the manufacturing process: risk that may not have been apparent with previous calibrations or service providers.

To the Measurement Community

As more calibration laboratories become accredited, correlation between these accredited laboratories’ measurements will improve, thereby improving the overall measurement process.

Conclusion

Calibration is a multifaceted process that requires multiple guidelines to ensure integrity and correctness of the finished product. These guidelines should be incorporated into the quality process of any calibration laboratory. Accreditation assures this.

If a quality calibration is important to you, your criteria for selection of a calibration supplier should include the elements of 17025 as listed above. If you select a laboratory accredited to 17025, you may rest assured that this laboratory meets these requirements.

Is your current calibration supplier meeting these requirements?

About the Author

Keith Bennett has been in the field of metrology for over 26 years and is proficient in multiple disciplines within the field. Keith spent ten years in the United States Air Force (USAF) primarily working in the areas of physical/dimensional, primary DC/low frequency, and RF/microwave. Keith’s career progressed from Calibration Technician to Quality Process Evaluator, to Master Instructor for the USAF Metrology School, Precision Measurement Equipment Laboratories (PMEL).

After the military, Keith spent the next ten years working for Compaq Computer Corporation in their corporate metrology laboratory. Keith’s primary responsibilities at Compaq focused on analytical metrology, Automated Test and Evaluation (ATE) and championing manufacturing support teams tasked with the calibration of in-line equipment used in making printed circuit boards. While at Compaq, Keith also held the position of Radiation/Laser Safety Officer.